Recursion can feel scary at first. Functions calling themselves. Problems splitting into smaller problems. And then strange equations called recurrences appear. But here is the good news. There is a shortcut. It is called the Master Method. And once you understand it, many complex problems become simple and even fun.

TLDR: The Master Method helps you quickly solve recurrence relations that appear in divide and conquer algorithms. You compare three parts of the recurrence and match them to one of three easy cases. Each case gives you the time complexity right away. Learn the pattern once, and you can solve many problems in minutes.

Let us break everything down slowly. No stress. No heavy math. Just clear steps.

Why Do We Even Need the Master Method?

When we study algorithms like Merge Sort or Binary Search, we often describe their running time using recurrence relations.

A recurrence relation looks like this:

T(n) = 2T(n/2) + n

That line means:

- You break a problem of size n into smaller pieces.

- You solve each smaller piece.

- You do some extra work to combine the answers.

But how fast is the algorithm overall? Is it O(n)? O(n log n)? O(n²)?

Solving recurrences by expanding them again and again takes time. It gets messy. The Master Method saves you from that.

The Standard Form You Must Know

The Master Method works for recurrences that look like this:

T(n) = aT(n/b) + f(n)

Three simple parts:

- a = number of subproblems

- n/b = size of each subproblem

- f(n) = extra work done outside recursion

That is it. If your recurrence matches this format, you can use the Master Method.

Now the key idea:

Compare f(n) with nlogba.

Yes. That tiny expression is the heart of everything. Do not panic. It is easier than it looks.

Step 1: Compute the Magic Value

First, calculate:

logba

Then compute:

nlogba

This is your comparison baseline.

Once you have it, compare it with f(n).

There are only three cases. Just three. That is why this method is awesome.

Case 1: f(n) Is Smaller

If:

f(n) = O(nlogba − ε)

That strange epsilon (ε) just means “a little smaller.”

In simple words:

If f(n) grows slower than nlogba, use Case 1.

The answer becomes:

T(n) = Θ(nlogba)

The recursive part dominates.

Example of Case 1

T(n) = 4T(n/2) + n

- a = 4

- b = 2

Compute:

log24 = 2

So baseline is:

n²

Compare:

f(n) = n

Clearly, n grows slower than n².

This is Case 1.

Final Answer: Θ(n²)

Done. No recursion tree needed.

Case 2: f(n) Is Equal

If:

f(n) = Θ(nlogba)

That means both parts grow at the same rate.

Then the answer becomes:

T(n) = Θ(nlogba log n)

You just add a log n factor.

Example of Case 2

T(n) = 2T(n/2) + n

- a = 2

- b = 2

Compute:

log22 = 1

Baseline:

n¹ = n

Compare:

f(n) = n

They match.

This is Case 2.

Final Answer: Θ(n log n)

This is why Merge Sort runs in n log n time.

Case 3: f(n) Is Larger

If:

f(n) = O(nlogba + ε)

In simple words:

If f(n) grows faster than the baseline, use Case 3.

Then:

T(n) = Θ(f(n))

The extra work dominates everything.

Example of Case 3

T(n) = 2T(n/2) + n²

- a = 2

- b = 2

Compute:

log22 = 1

Baseline:

n

Compare:

f(n) = n²

n² grows faster than n.

This is Case 3.

Final Answer: Θ(n²)

Simple.

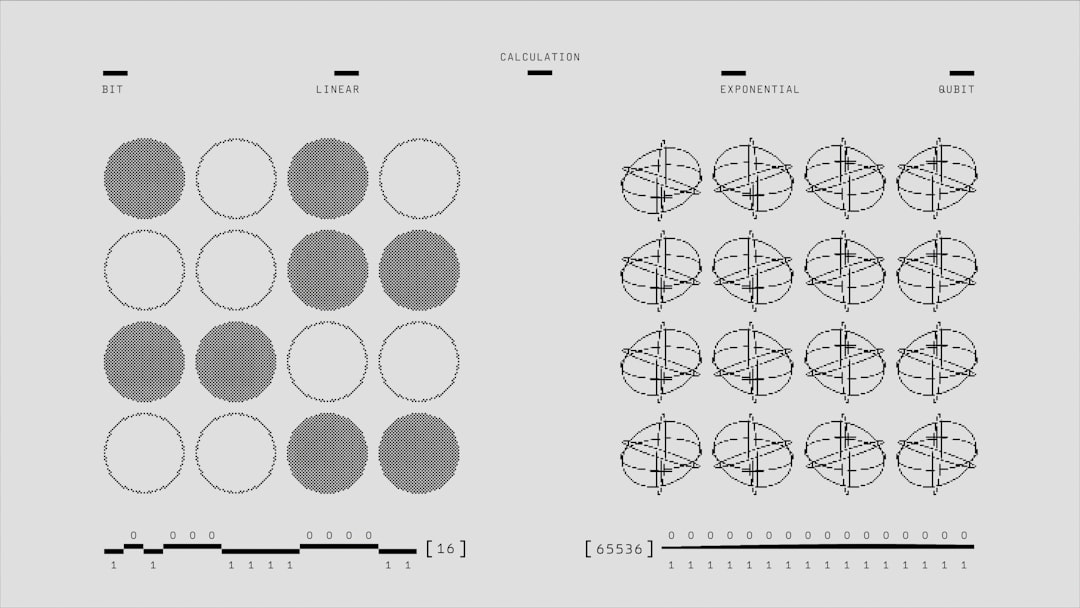

A Visual Way to Think About It

Imagine building a recursion tree.

Each level does some work.

- If the bottom levels dominate, it is Case 1.

- If all levels contribute equally, it is Case 2.

- If the top level dominates, it is Case 3.

This mental model helps you remember the logic instead of memorizing formulas.

Image not found in postmetaCommon Mistakes Students Make

Let us save you from pain.

1. Forgetting the Standard Form

If your recurrence is not in the form T(n) = aT(n/b) + f(n), rewrite it first.

2. Wrong Log Calculation

Be careful with log bases. logba means “what power of b equals a?”

3. Ignoring Growth Rates

We compare growth rates, not exact numbers. Constants do not matter in Big-O.

4. Using Master Method Everywhere

It does not work for all recurrences. For example:

T(n) = T(n − 1) + n

This is not divide and conquer. So Master Method will not help.

Step-by-Step Cheat Sheet

When you see a recurrence in an exam, follow this checklist:

- Write it in standard form.

- Identify a, b, and f(n).

- Compute logba.

- Calculate baseline nlogba.

- Compare f(n) with the baseline.

- Pick Case 1, 2, or 3.

- Write the final Θ result.

That is your full system.

Why the Master Method Is Powerful

Think about what it does.

It compresses a long recursive expansion into a 30-second process.

It turns this:

- Drawing recursion trees

- Summing geometric series

- Long algebra steps

Into this:

- One log calculation

- One comparison

- One final answer

That is efficiency.

Practice Makes It Easy

The first few problems may feel slow.

That is normal.

After 10 to 15 examples, your brain sees patterns instantly.

You will look at:

T(n) = 8T(n/2) + n³

And immediately think:

- a = 8

- b = 2

- log28 = 3

- baseline = n³

- Matches Case 2

- Answer: n³ log n

Fast. Clean. Confident.

Big Picture Understanding

The Master Method teaches something deeper.

It shows how algorithms scale.

It shows how dividing problems affects performance.

It shows that small differences in growth rates matter a lot.

For large inputs:

- n grows slower than n log n

- n log n grows slower than n²

- n² grows slower than n³

The Master Method is really about comparing these growth speeds.

Final Thoughts

Recurrence relations are not monsters.

They are patterns.

The Master Method is your pattern detector.

Remember:

- Write the standard form.

- Compute logba.

- Compare growth rates.

- Pick one of three cases.

That is all.

Once you master this tool, divide and conquer algorithms feel easier. Exams feel lighter. And algorithm analysis becomes logical instead of scary.

Now grab a few recurrence problems and try them.

You might even start enjoying them.

Yes. Recurrence solving can actually be fun.

I’m Sophia, a front-end developer with a passion for JavaScript frameworks. I enjoy sharing tips and tricks for modern web development.